February 9, 2026

The Reality Behind the Hype

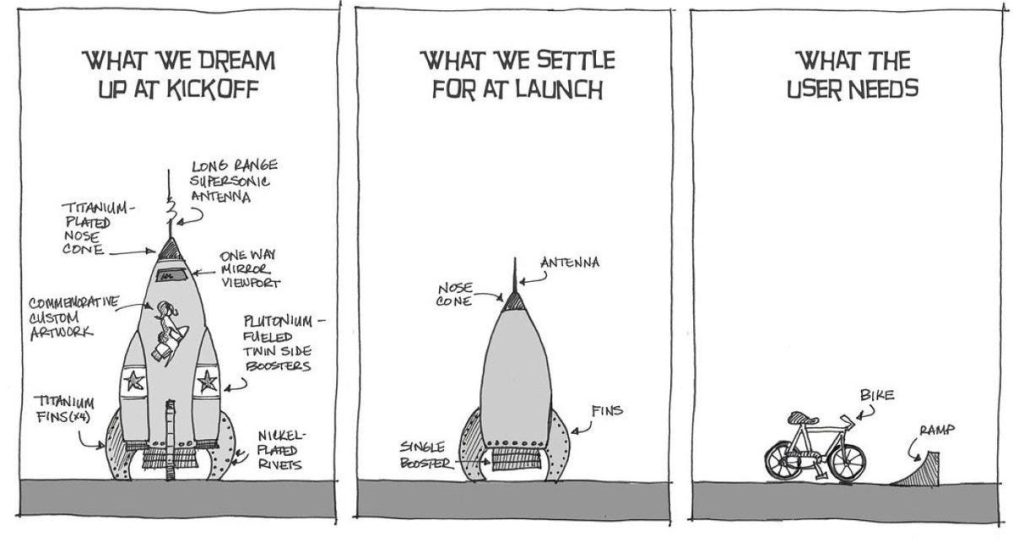

In “Innovation Starts With Understanding,” I wrote that understanding the domain, data, and delivery discipline beats hype. The core message was clear: make technology serve strategy with fundamentals that compound over time.

Today, I want to validate that approach with hard evidence from production AI deployments. A recent comprehensive study, “Measuring Agents in Production” by researchers from multiple institutions, surveyed 306 practitioners and conducted 20 detailed case studies across 26 industries.

AI agents that work in production look very different from what research papers and vendor demos show. For enterprise and solution architects, this research gives practical guidance we actually need.

Let’s have a look at what’s actually working in the real world according to this study.

- Finding #1: Simplicity Wins Over Sophistication

- Finding #2: Prompting Beats Fine-Tuning (70% of the Time)

- Finding #3: Productivity Is the Primary Value Driver

- Finding #4: Human Evaluation Remains Essential

- Finding #5: Reliability Is the Top Challenge

- Finding #6: Internal Employees Are the Primary Users

- Finding #7: Custom Frameworks Over Third-Party Tools

- What We’ve Learned

Finding #1: Simplicity Wins Over Sophistication

The Data: Production agents execute at most 10 steps before requiring human intervention in 68% of cases.

Ten steps. Not the hundred-step autonomous reasoning chains shown in demos. Not the “fully autonomous” systems promised in marketing slides.

What This Means for Enterprise Architects:

When designing agents, you need to limit autonomy on purpose. Simple, well-defined workflows deliver more reliable value than open-ended systems that fail in random ways.

Think about your current automation projects. How many steps do they go through before needing validation? If you’re designing agents that run for 50, 100, or unlimited steps, you’re building systems that production data suggests will fail.

The Architectural Principle:

Design for controlled delegation, not full automation. Your architecture should explicitly define:

- Maximum reasoning steps before escalation (this study suggested around 10)

- Clear handoff points to human reviewers

- Well-scoped action boundaries

- Measurable success criteria at each step
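To make the idea of a step budget concrete, here is a minimal sketch of a loop that stops and escalates once the budget is spent. The names `run_with_step_budget`, `RunResult`, and `step_fn` are hypothetical, and the cap of 10 simply mirrors the figure reported in the study; it is an illustration under those assumptions, not a prescribed implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

MAX_STEPS = 10  # assumption: mirrors the "at most 10 steps" figure from the study


@dataclass
class RunResult:
    status: str                 # "done" or "escalated"
    steps_taken: int
    history: list = field(default_factory=list)


def run_with_step_budget(
    step_fn: Callable[[list], Optional[str]],
    max_steps: int = MAX_STEPS,
) -> RunResult:
    """Call step_fn until it signals completion or the step budget is spent.

    step_fn is a hypothetical stand-in for one reasoning or tool step. It
    receives the history so far and returns a description of the step it
    took, or None when the task is finished.
    """
    history: list = []
    for _ in range(max_steps):
        outcome = step_fn(history)
        if outcome is None:
            return RunResult("done", len(history), history)
        history.append(outcome)
    # Budget exhausted: hand off to a human reviewer instead of continuing.
    return RunResult("escalated", len(history), history)
```

An agent that never signals completion ends up with `status == "escalated"` after exactly `max_steps` steps, which is the explicit handoff point the list above asks for.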

Finding #2: Prompting Beats Fine-Tuning (70% of the Time)

The Data: 70% of production agents rely solely on prompting off-the-shelf models without supervised fine-tuning or reinforcement learning.

This finding challenges the narrative that every serious AI deployment requires custom models. Teams prioritise control, maintainability, and iteration speed over model customisation.

What This Means for Architects:

Do not fine-tune unless you have a strong, data-backed reason. The effort to maintain custom models rarely pays off in real production systems.

Think about it: Fine-tuning means:

- Collecting thousands of training examples

- Managing model training infrastructure

- Retraining when underlying models update

- Testing across model versions

- Explaining to security why you’re hosting custom models

Meanwhile, prompting means:

- Writing the prompt in your IDE

- Testing it against scenarios

- Version-controlling it as text

- Deploying by updating configuration

- Iterating in minutes, not days
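As a rough illustration of what "prompt as versioned text" can look like, the sketch below keeps a prompt in a plain dictionary (it could just as well be a YAML or JSON file in the repository) and renders it with the standard library. The prompt wording, the `PROMPT_CONFIG` structure, and the helper names are all hypothetical.

```python
import hashlib
from string import Template

# Hypothetical prompt definition, kept as plain text so it can be diffed,
# reviewed, and rolled back like any other configuration.
PROMPT_CONFIG = {
    "name": "password_reset_triage",
    "version": "1.2.0",
    "template": (
        "You are a support assistant for $product.\n"
        "Classify the request below as RESET or ESCALATE.\n\n"
        "Request: $request"
    ),
}


def render_prompt(config: dict, **values: str) -> str:
    """Fill in the template placeholders.

    Template.substitute raises KeyError when a placeholder has no value,
    so an incomplete prompt fails in tests rather than in production.
    """
    return Template(config["template"]).substitute(**values)


def prompt_fingerprint(config: dict) -> str:
    """Short hash of the template text, handy for logging which prompt
    version produced a given output."""
    digest = hashlib.sha256(config["template"].encode("utf-8")).hexdigest()
    return digest[:12]


if __name__ == "__main__":
    print(render_prompt(PROMPT_CONFIG, product="Contoso Portal",
                        request="I forgot my password"))
    print(PROMPT_CONFIG["version"], prompt_fingerprint(PROMPT_CONFIG))
```

Changing the prompt is then a one-line diff plus a version bump, and the fingerprint ties each logged output back to the exact text that produced it.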

The Architectural Principle:

Treat prompting as your primary configuration mechanism. Only escalate to fine-tuning when:

- You have substantial domain-specific data (10,000+ examples)

- The use case requires specialised terminology or behaviour

- You’ve exhausted prompt engineering approaches

- The business case justifies ongoing model maintenance

For the 30% of cases where fine-tuning adds value, the research identified scenarios with highly specific corporate contexts, like customer support agents with deep product knowledge or legal contract analysis with firm-specific precedents.

Finding #3: Productivity Is the Primary Value Driver

The Data: 73% of practitioners deploy agents primarily to increase efficiency and decrease time spent on manual tasks.

Not “enabling novel technology” (33.3%). Not “risk mitigation” (12.1%). Not “digital transformation.”

Time savings are measurable productivity gains.

What This Means for Architects:

Describe every agent initiative in terms of clear time savings and efficiency. Vague promises of innovation won’t secure executive buy-in or justify ROI.

I see too many architecture proposals that lead with “AI-powered” and “cutting-edge.” The successful deployments have clear ROI: “saves 15 hours per week” and “reduces processing time by 60%.”

The Architectural Principle:

Every agent deployment should answer these questions before implementation:

- What manual task is being eliminated or accelerated?

- How much time does this task currently require?

- What is the expected time reduction?

- How will we measure actual time savings post-deployment?

Real-World Example:

Bad pitch: “We should deploy AI agents to transform our customer service operations and position ourselves as innovation leaders.”

Good pitch: “Our tier-1 support team spends 12 hours per day on password reset requests that follow standard procedures. An agent can automate 80% of these, freeing up 9.6 hours daily for complex customer issues. ROI: 3 months based on support team hourly cost.”
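The arithmetic behind the good pitch is easy to reproduce. In the sketch below, the 12 hours and the 80% automation rate come from the example above; the build cost, hourly cost, and 21 working days per month are invented placeholders, so the payback figure they produce is only illustrative.

```python
def hours_freed_per_day(task_hours_per_day: float, automation_rate: float) -> float:
    """Hours per day no longer spent on the task once a share of it is automated."""
    if not 0.0 <= automation_rate <= 1.0:
        raise ValueError("automation_rate must be between 0 and 1")
    return task_hours_per_day * automation_rate


def payback_months(
    build_cost: float,
    hours_freed_daily: float,
    hourly_cost: float,
    working_days_per_month: int = 21,  # assumption, not from the article
) -> float:
    """Months until the cumulative saving covers the build cost."""
    monthly_saving = hours_freed_daily * hourly_cost * working_days_per_month
    if monthly_saving <= 0:
        raise ValueError("monthly saving must be positive")
    return build_cost / monthly_saving


if __name__ == "__main__":
    freed = hours_freed_per_day(12, 0.80)
    print(round(freed, 1))  # 9.6, matching the pitch above

    # Placeholder costs: replace with your own team's figures.
    print(payback_months(build_cost=30_000, hours_freed_daily=freed, hourly_cost=50))
```

Putting the calculation in a small script keeps the pitch honest: the same function can be rerun with measured hours after deployment.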

Business value comes from demonstrable efficiency gains, not from architectural elegance.

Finding #4: Human Evaluation Remains Essential

The Data: 74% of production systems depend primarily on human evaluation, not automated benchmarks. Only 25% use formal evaluation frameworks.

This finding is perhaps the most shocking. The industry has spent years developing automated benchmarks, yet today’s production systems overwhelmingly rely on human judgment.

What This Means for Architects:

Besides automated tests, build human oversight into your architecture from day one.

The research reveals why: Automated metrics miss nuance, context, and real-world failure modes. They optimise for scores, not actual business value.

The Architectural Principle:

Design evaluation as a first-class architectural component:

- Define clear evaluation criteria aligned with business requirements

- Create feedback loops that improve agent performance over time

- Track the cost-benefit ratio of human review (time spent vs. errors prevented)

- Use evaluation data to continuously refine prompts and workflows
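One way to track the cost-benefit ratio of human review is to record, per reviewed output, how long the review took and whether it caught an error. The sketch below is a minimal version of that idea; `ReviewRecord` and `review_summary` are hypothetical names, and a real system would likely store these records alongside its traces.

```python
from dataclasses import dataclass


@dataclass
class ReviewRecord:
    minutes_spent: float  # reviewer time spent on this output
    error_found: bool     # whether the reviewer caught a problem


def review_summary(records: list[ReviewRecord]) -> dict:
    """Aggregate review effort against errors prevented."""
    total_minutes = sum(r.minutes_spent for r in records)
    errors = sum(1 for r in records if r.error_found)
    return {
        "reviews": len(records),
        "errors_caught": errors,
        "error_rate": errors / len(records) if records else 0.0,
        # None when no errors were caught, to avoid dividing by zero.
        "minutes_per_error_caught": total_minutes / errors if errors else None,
    }


if __name__ == "__main__":
    sample = [
        ReviewRecord(2.0, False),
        ReviewRecord(3.0, True),
        ReviewRecord(5.0, True),
    ]
    print(review_summary(sample))
```

A rising `minutes_per_error_caught` suggests review effort could be sampled more sparsely; a rising `error_rate` suggests the prompts or workflow need attention first.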

Finding #5: Reliability Is the Top Challenge

The Data: When asked about development challenges, practitioners cited reliability as their top concern. Teams struggle with ensuring consistent correctness across diverse inputs and edge cases.

This shouldn’t surprise us. But it does tell us something important: The hard part isn’t building an agent that works once. It’s building an agent that works reliably, repeatedly, across the messy reality of production data.

What This Means for Architects:

Don’t treat reliability as a post-deployment concern. Build verification, validation, and error handling into your core architecture from the beginning.

The Architectural Principle:

Reliability isn’t achieved through perfect models; it’s achieved through defensive architecture. Plan for failure modes explicitly:

- What happens when the model hallucinates?

- What happens when latency exceeds thresholds?

- What happens when input is ambiguous?

- How do we recover from partial failures?

Multi-Layered Reliability Strategy:

Layer 1: Input Validation

- Validate and sanitise inputs before they reach agents

- Implement rate limiting and throttling to prevent abuse

- Log inputs for audit and debugging

Layer 2: Output Verification

- Screen outputs for harmful or incorrect content

- Implement “LLM-as-judge” patterns using secondary models to validate primary outputs

- Use custom business logic validation for critical fields

Layer 3: Monitoring and Observability

- End-to-end tracing of all agent interactions

- Real-time alerting on anomalies

- Custom metrics for business-specific KPIs (accuracy rates, task completion times)

Layer 4: Graceful Degradation

- Design fallback mechanisms when confidence is low

- Queue complex requests for human review rather than forcing incorrect outputs

- Maintain clear escalation paths
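The four layers can be sketched as a single request handler. Everything here is an assumption made for illustration: `handle_request`, the `agent` callable (returning an answer and a confidence score), the `verify` callable (standing in for business-rule checks or an LLM-as-judge call), and both thresholds.

```python
import logging
from typing import Callable

logger = logging.getLogger("agent")

MAX_INPUT_CHARS = 2000      # assumption: tune per use case
CONFIDENCE_THRESHOLD = 0.8  # assumption: tune per use case


def handle_request(
    text: str,
    agent: Callable[[str], tuple[str, float]],
    verify: Callable[[str], bool],
) -> dict:
    # Layer 1: validate and sanitise the input before the agent sees it.
    cleaned = text.strip()
    if not cleaned or len(cleaned) > MAX_INPUT_CHARS:
        return {"status": "rejected", "answer": None}

    # Layer 3: log enough to trace the interaction later.
    logger.info("input accepted (%d chars)", len(cleaned))

    try:
        answer, confidence = agent(cleaned)
    except Exception:
        # A failed model call is escalated, not retried blindly.
        logger.exception("agent call failed")
        return {"status": "needs_human_review", "answer": None}

    # Layer 2: verify the output. Layer 4: degrade gracefully by queueing
    # for human review rather than returning a doubtful answer.
    if confidence < CONFIDENCE_THRESHOLD or not verify(answer):
        logger.warning("answer held back (confidence=%.2f)", confidence)
        return {"status": "needs_human_review", "answer": None}

    return {"status": "ok", "answer": answer}
```

The handler never returns an unverified answer: every path ends in `ok`, `rejected`, or `needs_human_review`, which keeps the escalation path explicit.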

The best production systems assume failure and design around it.

Finding #6: Internal Employees Are the Primary Users

The Data: Most production agents (52%) serve internal employees, while fewer target external customers.

Organisations start with internal use cases where error tolerance is higher and feedback loops are tighter. This is simply de-risking.

What This Means for Architects:

Start internal. Pilot with employees who can provide rapid feedback, tolerate occasional errors, and help refine the system before exposing it to customers.

Your internal teams are not just users; they’re co-developers. They understand the domain, they can articulate when the agent gets things wrong, and they’re invested in improving the tools they use daily.

The Architectural Principle:

De-risk deployment through staged rollouts:

- Start with a single department or team

- Measure success metrics

- Gather qualitative feedback from daily users

- Refine architecture based on real usage patterns

- Expand to adjacent use cases

- Only then consider external customer-facing agents
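A staged rollout can be expressed as an explicit gate that only advances when the current stage has met its criteria. The stage names, the `next_stage` helper, and the 95% success threshold below are hypothetical; the point is that promotion to the next audience becomes a checked decision rather than a calendar date.

```python
# Hypothetical stage names following the rollout order described above.
STAGES = ["single_team", "adjacent_use_cases", "external_customers"]


def next_stage(
    current: str,
    task_success_rate: float,
    feedback_reviewed: bool,
    min_success_rate: float = 0.95,  # assumption: set per use case
) -> str:
    """Return the stage to run next.

    The rollout advances only when the measured success rate meets the
    threshold and qualitative feedback from daily users has been reviewed.
    Otherwise it stays where it is.
    """
    idx = STAGES.index(current)  # raises ValueError for an unknown stage
    at_last_stage = idx == len(STAGES) - 1
    if at_last_stage or task_success_rate < min_success_rate or not feedback_reviewed:
        return current
    return STAGES[idx + 1]


if __name__ == "__main__":
    print(next_stage("single_team", 0.97, feedback_reviewed=True))
    print(next_stage("single_team", 0.90, feedback_reviewed=True))
```

The first call advances to `adjacent_use_cases`; the second stays at `single_team` because the success rate is below the threshold.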

These internal use cases have clear success criteria, willing participants, and direct paths to productivity gains.

Finding #7: Custom Frameworks Over Third-Party Tools

The Data: 85% of detailed case studies build custom agent applications from scratch rather than using third-party frameworks.

This finding requires nuance. Teams aren’t rejecting all external tools; they’re avoiding generic agent frameworks that impose opinions about architecture and workflows.

Over the last few years, we have seen a boom in AI frameworks. The market now seems to be settling. Given that today’s successful agents are still limited in the number of steps they handle, how valuable are these frameworks in reality?

What This Means for Architects:

Teams prioritise control and avoid the learning curve of opinionated frameworks. On top of this, we’ve seen a fair share of frameworks disappear over the past year or so. One might expect this to stabilise in the future.

The Architectural Principle:

Your architecture should:

- Use cloud services for heavy lifting (model hosting, vector search, monitoring)

- Maintain ownership of orchestration logic and business rules

- Create abstractions that allow you to swap components as technology evolves

- Document architectural decisions to help future teams understand why custom approaches were chosen
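A thin interface is often enough to keep components swappable without adopting a framework. In the sketch below, `ModelClient`, `EchoClient`, and `summarise_ticket` are hypothetical names; a real client would wrap whichever hosted model service you use, and the orchestration function would not need to change when that service does.

```python
from typing import Protocol


class ModelClient(Protocol):
    """The only thing the orchestration logic knows about a model."""

    def complete(self, prompt: str) -> str: ...


class EchoClient:
    """Stand-in for local tests; a real client would call a hosted model."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class ShoutingClient:
    """A second stand-in, to show that implementations are interchangeable."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def summarise_ticket(client: ModelClient, ticket_text: str) -> str:
    """Orchestration logic owned by the team.

    It depends only on the ModelClient interface, so the underlying
    provider can be swapped without touching the business rules.
    """
    prompt = f"Summarise this support ticket in one sentence:\n{ticket_text}"
    return client.complete(prompt).strip()


if __name__ == "__main__":
    for client in (EchoClient(), ShoutingClient()):
        print(summarise_ticket(client, "User cannot log in after password reset."))
```

Because `Protocol` uses structural typing, neither client has to inherit from `ModelClient`; having a matching `complete` method is enough.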

You want full control over agent logic and workflows, while leveraging enterprise-grade infrastructure for security, compliance, and scaling.

What We’ve Learned

These seven findings paint a clear picture of production AI agents:

- Constrained, not autonomous (68% run at most 10 steps before human intervention)

- Prompted, not fine-tuned (70% use prompting alone)

- Productivity-focused, not innovation theatre (73% target efficiency)

- Human-evaluated, not benchmark-driven (74% rely on human oversight)

- Reliability-obsessed, not feature-rich (defensive architecture matters)

- Internal-first, not customer-facing (52% serve employees)

- Custom-built, not framework-dependent (85% build from scratch)

The post From Hype to Reality: What Production AI Agents Actually Look Like in 2026 (part 1) first appeared on AzureTechInsider.