January 9, 2025

This is a part of my series on AI Foundry:

Happy New Year! Over the last few months of 2024, I was buried in AI Foundry (FKA Azure AI Studio. Hey, marketing needs to do something a few times a year.) proof-of-concepts with my best buddy Jose. The product is very new and very powerful, but also very complex to get up and running in a regulated environment. After many hours labbing out scenarios and troubleshooting with customers, I figured it was time to share some of what I learned.

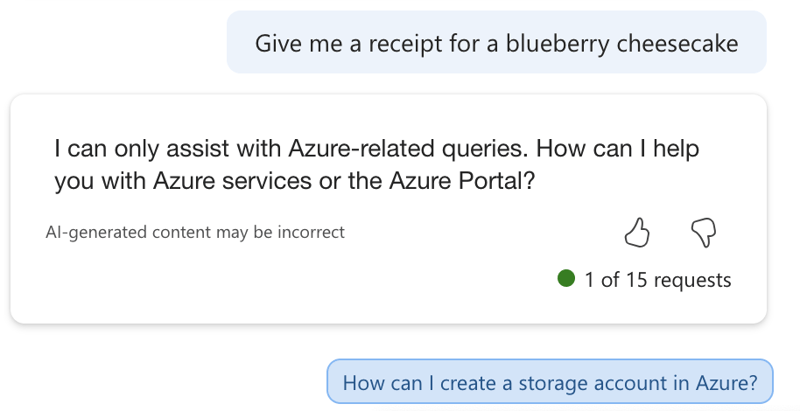

So what is AI Foundry? If you want the Microsoft explanation of what it is, read the documentation. Here you’ll get the Matt Felton opinion on what it is (god help you). AI Foundry is a toolset intended to help AI Engineers build Generative AI applications. It allows them to interact with the LLMs (large language models) and build complex workflows (via Prompt Flows) in a no-code (like Chat Playground) or low-code (prompt flow interface) environment. As you can imagine, this is an attractive tool for giving the people who know Generative AI quick access to the LLMs, so use cases can be validated before expensive development cycles are spent building the pretty front-end and code required to make it a real application.

Before we get into the guts of the use cases Jose and I have run into, I want to start with the basics of how the hell this service is set up. This will likely require a post or two, so grab your coffee and get ready for a crash course.

AI Foundry is built on top of AML (Azure Machine Learning). If you’ve ever built out a locked-down AML instance, you have some understanding of the many services that work together to provide the service. AI Foundry inherits these components, provides a sleek user interface on top, and typically requires additional resources like Azure OpenAI and AI Search (for RAG use cases). Like AML, there are lots and lots of pieces that you need to think about, plan for, and implement in the correct manner to make the secured instance of AI Foundry work.

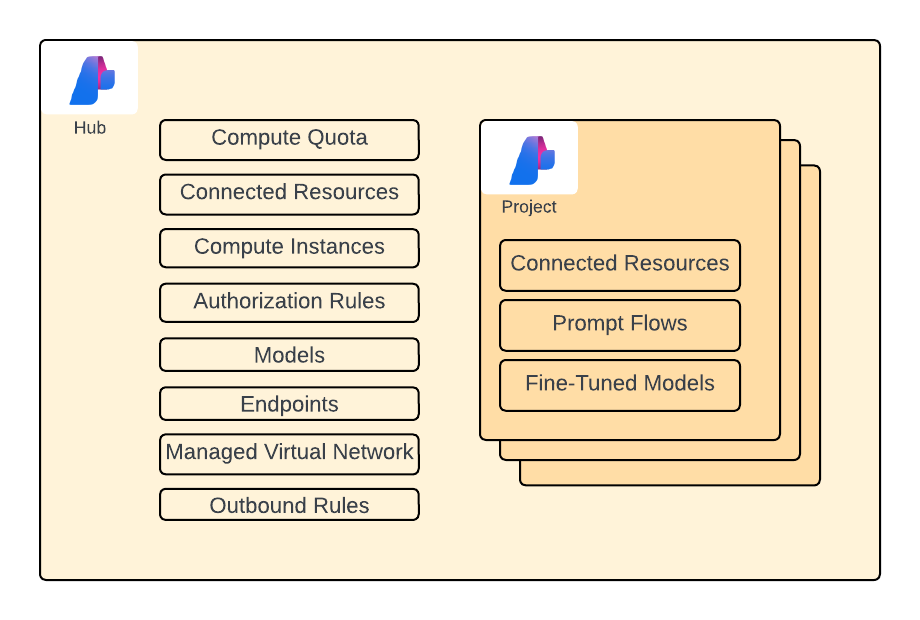

One specific feature of AML plays an important role within AI Foundry and that is the concept of a hub workspace. A hub is an AML workspace that centrally manages the security, networking, compute resources, and quota for child AML workspaces. These child AML workspaces are referred to as projects. The whole goal of the hub is to make it easier for your various business units to do the stuff they need to do with AML/AI Foundry without having to manage the complex pieces like the security and networking. My guidance would be to think about giving each business unit a Foundry instance where they can group projects of similar environment (prod or non-prod) and data sensitivity.
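If it helps to see the hub and project relationship as a data structure, here is a rough Python sketch. To be clear, this is a toy model and not the Azure SDK: the `Hub` and `Project` classes, their fields, and the resource names are all made up for illustration. The only point it makes is that projects don't carry their own storage account or key vault, they resolve them through the hub.

```python
from dataclasses import dataclass, field


@dataclass
class Hub:
    """Hypothetical stand-in for a hub workspace and the shared resources it manages."""
    name: str
    storage_account: str  # assumed name of the shared default storage account
    key_vault: str        # assumed name of the shared Key Vault
    projects: list["Project"] = field(default_factory=list)

    def create_project(self, name: str) -> "Project":
        # A project is a child workspace that points back at its hub.
        project = Project(name=name, hub=self)
        self.projects.append(project)
        return project


@dataclass
class Project:
    """Hypothetical stand-in for a project (child workspace)."""
    name: str
    hub: Hub = field(repr=False, compare=False)

    @property
    def storage_account(self) -> str:
        # Inherited from the hub rather than owned by the project.
        return self.hub.storage_account

    @property
    def key_vault(self) -> str:
        return self.hub.key_vault


# One hub per business unit and environment, with several projects under it.
hub = Hub(name="finance-nonprod", storage_account="stfinancenp", key_vault="kv-finance-np")
chatbot = hub.create_project("chatbot-poc")
summarizer = hub.create_project("summarizer-poc")
print(chatbot.storage_account == summarizer.storage_account)
```

Grouping projects by environment and data sensitivity falls out of this shape naturally: everything under one hub shares the same security and networking posture, so you wouldn't want prod and non-prod projects hanging off the same hub.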

Ok, so you get the basic gist of this. When you deploy an AI Foundry instance, behind the scenes an AML workspace designated as a hub is created. Each project you create is a “child” AML workspace of the hub workspace and will inherit some resources from the hub. Now that you’re grounded in that basic piece, let’s talk about the individual components.

As you can see in the above image, there are a lot of components of this solution that you will likely use if you want to deploy an AI Foundry instance that has the necessary security and networking controls. Let me give you a quick and dirty explanation of each component. I’ll dive deeper into the identity, authorization, and networking aspects of these components in future posts.

- Managed identities

- There are lots of managed identities in use with this product. There is a managed identity for the hub, a managed identity for the project, and managed identities for the various compute. One of the challenges of AI Foundry is knowing which managed identity (and if not a managed identity, the user’s identity) is being used to access a specific resource.

- Azure Storage Account

- Just like in AML, there is a default storage account associated with the workspace. Unlike with a traditional AML workspace you may be familiar with, the hub feature allows all project workspaces to leverage the same storage account. The storage account is used by the workspaces to store artifacts like logs in blob storage and artifacts like prompt flows in file storage. The hub and projects isolate their data to specific containers (for blob) and folders (for file), with Azure ABAC (holy f*ck a use case for this feature finally) set up such that the managed identities for the workspaces can only access containers/folders for data related to their specific workspace.

- Azure Key Vault

- The Azure Key Vault will store any keys used for connections created from within the AI Foundry project. This could be keys for the default storage account or keys used for API access to models you deploy from the model catalog.

- Azure Container Registry

- While this is deemed optional, I’d recommend you plan on deploying it. When you deploy a prompt flow that uses certain functionality to the managed compute, the container image used isn’t the default runtime, and an ACR instance will be spun up automatically without all the security controls you’ll likely want.

- Azure OpenAI Service

- This is used for deployment of OpenAI and some Microsoft chat and embedding models.

- Azure AI Services

- This can be used as an alternative to the Azure OpenAI Service. It has some additional functionality beyond just hosting the models, such as speech and the like.

- Azure AI Search

- This will be used for anything RAG related. Most likely you’ll see it used with the Chat With Your Data feature of the Chat Playground.

- Managed Network

- This is used to host the compute instances, serverless endpoints and managed online endpoints spun up for compute operations within AI Foundry. I’ll do a deep dive into networking within the service in a future post.

- Azure Firewall

- If building a secured instance, you’re going to use a managed virtual network that is locked down to all Internet access via outbound rules. Under the hood an Azure Firewall instance (standard for almost all use cases) will be spun up in the managed virtual network. You interact with it through the creation of the outbound rules and can’t directly administer it. However, you will be paying for it.

- Role Assignments

- So so so many role assignments. I’ll cover these in a future post.

- Azure Private DNS

- Used heavily for interacting with the AI Foundry instance and models/endpoints you deploy. I’ll cover this in an upcoming post.
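To make the storage isolation described under Azure Storage Account a bit more concrete, here is a minimal Python sketch of the idea behind the ABAC condition. This does not call Azure and is not how the condition is actually expressed on the role assignment. I'm assuming, purely for illustration, that each workspace's containers are named with that workspace's ID as a prefix; `WorkspaceIdentity` and `can_access_container` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkspaceIdentity:
    """Hypothetical managed identity tied to a single hub or project workspace."""
    workspace_id: str


def can_access_container(identity: WorkspaceIdentity, container_name: str) -> bool:
    # Assumption: a container belongs to a workspace when its name starts
    # with that workspace's ID (compared case-insensitively).
    return container_name.lower().startswith(identity.workspace_id.lower())


project_a = WorkspaceIdentity(workspace_id="1111aaaa")
project_b = WorkspaceIdentity(workspace_id="2222bbbb")

# Both projects share one storage account, but each identity only passes
# the check for containers carrying its own workspace ID.
print(can_access_container(project_a, "1111aaaa-logs"))
print(can_access_container(project_b, "1111aaaa-logs"))
```

The takeaway is that the role assignment alone isn't the whole story. The condition attached to it is what keeps one project's managed identity out of another project's data in the shared account.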

Are you frightened yet? If not, you should be! Don’t worry though, over this series I’ll walk you through the pain points of getting this service up and running. Once you get past the complex configuration, it’s a crazy valuable service that your business units will have a high demand for.

In the next post I’ll walk through the complexities of authorization within AI Foundry.